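Hardware decoding in ffmpeg simply means that ffmpeg does not use its own software decoders but looks up a hardware decoder provided by the system. On Android, that means calling the system's decoder middleware, MediaCodec, through JNI. There are plenty of articles about ffmpeg hardware decoding, and the official ffmpeg demos show it in detail. Most of this public material, however, targets playback of video files (AVCC); very little of it covers hardware decoding of AnnexB video streams.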

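As is well known, before hardware decoding with MediaCodec on Android you must first describe the video stream through MediaFormat; only then can the decoder be started correctly. In ffmpeg, when decoding from a video file, ffmpeg automatically reads the extradata (the SPS, PPS and related information) from the file header and uses it to set AVCodecContext->extradata and AVCodecContext->extradata_size, so that avcodec_open2 can open the hardware decoder. AVCodecContext->extradata holds the SPS and PPS, which describe the width, height and other parameters that MediaFormat would carry. An AnnexB stream has no file header to read these parameters from, so extradata stays empty and avcodec_open2 fails. This is equivalent to using MediaCodec in Java without configuring it through MediaFormat first.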

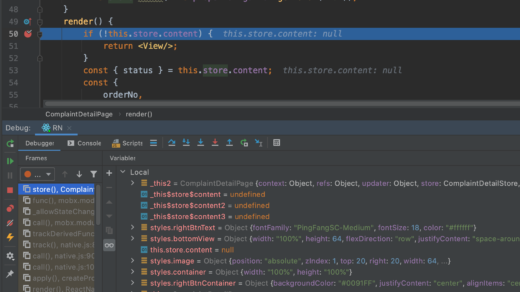

1. Hardware decoding

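Although an AnnexB stream has no file header, the stream itself carries NAL units containing the SPS and PPS (normally in front of each keyframe). So before opening the decoder, read one AVPacket with av_read_frame, extract the extradata from that packet, and fill it into the AVCodecContext; avcodec_open2 then succeeds.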

    // Extract the SPS/PPS from one AnnexB packet with the extract_extradata bitstream filter.
    // Returns 1 on success (the caller owns *extradata_dest and frees it with av_free), 0 otherwise.
    int extract_extradata(AVCodecContext *pCodecCtx, AVPacket *packet,
                          uint8_t **extradata_dest, int *extradata_size_dest)
    {
        const AVBitStreamFilter *bsf = av_bsf_get_by_name("extract_extradata");
        AVBSFContext *bsf_context = NULL;
        AVPacket *packet_ref = NULL;
        int ret;
        int done = 0;

        if (bsf == NULL) {
            LOGD("failed to get extract_extradata bsf\n");
            return 0;
        }
        if ((ret = av_bsf_alloc(bsf, &bsf_context)) < 0) {
            LOGD("failed to alloc bsf context\n");
            return 0;
        }
        if ((ret = avcodec_parameters_from_context(bsf_context->par_in, pCodecCtx)) < 0) {
            LOGD("failed to copy parameters from context\n");
            goto cleanup;
        }
        if ((ret = av_bsf_init(bsf_context)) < 0) {
            LOGD("failed to init bsf context\n");
            goto cleanup;
        }

        // Work on a new reference so that the caller's packet stays valid:
        // av_bsf_send_packet() takes ownership of the packet it is given.
        packet_ref = av_packet_alloc();
        if (packet_ref == NULL || av_packet_ref(packet_ref, packet) < 0) {
            LOGD("failed to ref packet\n");
            goto cleanup;
        }
        if ((ret = av_bsf_send_packet(bsf_context, packet_ref)) < 0) {
            LOGD("failed to send packet to bsf\n");
            goto cleanup;
        }

        while (ret >= 0 && !done) {
            // int matches the FFmpeg 4.x signature used in this article;
            // FFmpeg 5.0 and later take a size_t * here.
            int extradata_size = 0;
            uint8_t *extradata;

            ret = av_bsf_receive_packet(bsf_context, packet_ref);
            if (ret < 0) {
                if (ret != AVERROR(EAGAIN) && ret != AVERROR_EOF)
                    LOGD("bsf error, not eagain or eof\n");
                break;
            }
            extradata = av_packet_get_side_data(packet_ref, AV_PKT_DATA_NEW_EXTRADATA, &extradata_size);
            if (extradata) {
                LOGD("got extradata, %d size!\n", extradata_size);
                *extradata_dest = (uint8_t *) av_mallocz(extradata_size + AV_INPUT_BUFFER_PADDING_SIZE);
                if (*extradata_dest) {
                    memcpy(*extradata_dest, extradata, extradata_size);
                    *extradata_size_dest = extradata_size;
                    done = 1;
                }
            }
            // Release the filtered packet before asking the filter for the next one.
            av_packet_unref(packet_ref);
        }

    cleanup:
        // Both calls accept a pointer to NULL, so every error path can share them.
        av_packet_free(&packet_ref);
        av_bsf_free(&bsf_context);
        return done;
    }
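What the filter looks for can be illustrated without ffmpeg. The following is only a minimal sketch, assuming an H.264 AnnexB buffer; `find_h264_nal` and `has_sps_and_pps` are hypothetical helper names, not ffmpeg APIs. It scans for start codes and checks the NAL unit type (7 for SPS, 8 for PPS), which can be handy for verifying that the packet handed to extract_extradata actually contains parameter sets.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper (not an ffmpeg API): returns the offset of the first
 * NAL header byte whose nal_unit_type equals nal_type, or -1 if none exists.
 * A 4-byte start code 00 00 00 01 ends with the 3-byte form 00 00 01,
 * so matching the 3-byte form covers both. */
long find_h264_nal(const uint8_t *buf, size_t size, int nal_type)
{
    assert(buf != NULL);
    for (size_t i = 0; i + 3 < size; i++) {
        if (buf[i] == 0 && buf[i + 1] == 0 && buf[i + 2] == 1) {
            /* H.264 NAL header: the low 5 bits are nal_unit_type. */
            if ((buf[i + 3] & 0x1F) == nal_type)
                return (long)(i + 3);
            i += 2; /* skip the rest of this start code */
        }
    }
    return -1;
}

/* Returns 1 if the buffer carries both an SPS (type 7) and a PPS (type 8). */
int has_sps_and_pps(const uint8_t *buf, size_t size)
{
    return find_h264_nal(buf, size, 7) >= 0 && find_h264_nal(buf, size, 8) >= 0;
}
```

A packet for which `has_sps_and_pps` returns 0 (for example a plain P-frame) would presumably yield no extradata from the filter, in which case reading further packets until a keyframe arrives is one option.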

2. Saving a snapshot as JPEG

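If the frame is software-decoded YUV420P, you can pass it to the mjpeg encoder and simply declare the input format as AV_PIX_FMT_YUVJ420P. The format is YUVJ420P rather than YUV420P because YUVJ420P has the JPEG (full) color_range, which the JPEG encoder requires; see https://stackoverflow.com/a/33939577 . The catch is that on most Android devices, and on NVIDIA GPUs, hardware-decoded frames are not YUV420P but NV12. NV12 differs from YUVJ420P in more than color_range: its chroma is stored as one interleaved UV plane instead of separate U and V planes, so forcing AV_PIX_FMT_YUVJ420P as the input format makes the encoder crash. The frame therefore has to be converted to AV_PIX_FMT_YUVJ420P with sws_scale before it is encoded as JPEG.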

    AVFrame *pFrameYUVJ420;
    if (pix_fmt != AV_PIX_FMT_YUVJ420P) {
        pFrameYUVJ420 = av_frame_alloc();
        if (pFrameYUVJ420 == NULL) {
            LOGD("Could not allocate video frame: pFrameYUVJ420.");
            return -1;
        }
        // Describe the target frame, then let ffmpeg allocate refcounted buffers for it,
        // so that av_frame_free() later releases the pixel data as well.
        pFrameYUVJ420->format = AV_PIX_FMT_YUVJ420P;
        pFrameYUVJ420->width  = pFrame->width;
        pFrameYUVJ420->height = pFrame->height;
        if (av_frame_get_buffer(pFrameYUVJ420, 0) < 0) {
            LOGD("Could not allocate buffer for pFrameYUVJ420.");
            av_frame_free(&pFrameYUVJ420);
            return -1;
        }
        // The decoded frame is not YUVJ420P, so it must be converted before encoding.
        struct SwsContext *sws_ctx = sws_getContext(pFrame->width, pFrame->height, pix_fmt,
                                                    pFrame->width, pFrame->height, AV_PIX_FMT_YUVJ420P,
                                                    SWS_BILINEAR, NULL, NULL, NULL);
        if (sws_ctx == NULL) {
            LOGD("Could not create SwsContext.");
            av_frame_free(&pFrameYUVJ420);
            return -1;
        }
        // Pixel format conversion
        sws_scale(sws_ctx, (uint8_t const *const *) pFrame->data, pFrame->linesize,
                  0, pFrame->height, pFrameYUVJ420->data, pFrameYUVJ420->linesize);
        sws_freeContext(sws_ctx);
        // The source frame is no longer needed.
        av_frame_free(&pFrame);
    } else {
        pFrameYUVJ420 = pFrame;
    }
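To make the layout difference that sws_scale bridges more concrete, here is a minimal sketch, independent of ffmpeg, that splits the interleaved UV plane of NV12 into the separate U and V planes that YUV420P/YUVJ420P expect. The function name `nv12_uv_to_planar` is hypothetical, and the sketch does not touch the color range; it only shows why an NV12 frame cannot simply be relabeled as a three-plane format.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical helper: de-interleave an NV12 chroma plane (U0 V0 U1 V1 ...)
 * into tightly packed U and V planes of chroma_w x chroma_h bytes each.
 * uv_stride is the number of bytes per row of the source plane and may
 * include padding. */
void nv12_uv_to_planar(const uint8_t *uv, int uv_stride,
                       uint8_t *u, uint8_t *v, int chroma_w, int chroma_h)
{
    assert(uv != NULL && u != NULL && v != NULL);
    assert(uv_stride >= 2 * chroma_w);
    for (int row = 0; row < chroma_h; row++) {
        const uint8_t *src = uv + (size_t)row * uv_stride;
        for (int col = 0; col < chroma_w; col++) {
            u[row * chroma_w + col] = src[2 * col];     /* even bytes: U */
            v[row * chroma_w + col] = src[2 * col + 1]; /* odd bytes: V  */
        }
    }
}
```

For a 1920x1080 frame the chroma size would be 960x540. In practice sws_scale does this work (plus the range conversion), so the sketch is meant for understanding rather than as a replacement.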

3. OpenGL rendering

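Software-decoded YUV420P and hardware-decoded NV12 lay out their data differently, so the number of textures and the fragment shader differ as well. YUV420P needs three textures (Y, U and V); NV12 needs only two (Y and the interleaved UV). Since a video player usually has to support both hardware and software decoding, the shader here handles several formats.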
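As a rough illustration of the texture counts just described, the sketch below computes how many textures each layout needs and how large each one is. The types `YuvLayout` and `TexturePlane` and the function `describe_texture_planes` are hypothetical names introduced only for this example, and rounding odd dimensions up is an assumption.

```c
#include <assert.h>
#include <stddef.h>

typedef enum { LAYOUT_I420, LAYOUT_NV12 } YuvLayout;  /* hypothetical */

typedef struct {
    int width;            /* texture width in texels  */
    int height;           /* texture height in texels */
    int bytes_per_texel;  /* 1: luminance, 2: luminance + alpha */
} TexturePlane;

/* Fills planes[] and returns the number of textures the layout needs. */
int describe_texture_planes(YuvLayout layout, int width, int height,
                            TexturePlane planes[3])
{
    assert(planes != NULL && width > 0 && height > 0);
    int cw = (width + 1) / 2;   /* 4:2:0 chroma is half size in both axes */
    int ch = (height + 1) / 2;

    planes[0] = (TexturePlane){ width, height, 1 };   /* Y */
    if (layout == LAYOUT_NV12) {
        planes[1] = (TexturePlane){ cw, ch, 2 };      /* interleaved UV */
        return 2;
    }
    planes[1] = (TexturePlane){ cw, ch, 1 };          /* U */
    planes[2] = (TexturePlane){ cw, ch, 1 };          /* V */
    return 3;
}
```

The two-byte texel of the NV12 chroma plane is what allows a single texture to deliver both U and V to the fragment shader.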

Vertex shader and fragment shader:

    // Vertex shader (GLSL)
    #define GET_STR(x) #x
    static const char *vertexShader = GET_STR(
        attribute vec4 aPosition;  // vertex position
        attribute vec2 aTexCoord;  // texture coordinate of the vertex
        varying vec2 vTexCoord;    // texture coordinate passed to the fragment shader
        void main() {
            // Flip vertically: image rows are stored top-down, texture coordinates run bottom-up
            vTexCoord = vec2(aTexCoord.x, 1.0 - aTexCoord.y);
            gl_Position = aPosition;
        }
    );

    // Fragment shader
    static const char *fragYUV420P = GET_STR(
        precision mediump float;     // default float precision
        varying vec2 vTexCoord;      // coordinate passed from the vertex shader
        uniform sampler2D yTexture;  // input texture (opaque luminance, one byte per pixel)
        uniform sampler2D uTexture;
        uniform sampler2D vTexture;
        uniform int u_ImgType;       // 1:RGBA, 2:NV21, 3:NV12, 4:I420
        void main() {
            if (u_ImgType == 1) // RGBA
            {
                gl_FragColor = texture2D(yTexture, vTexCoord);
            }
            else if (u_ImgType == 2) // NV21: chroma bytes are stored as V, U
            {
                vec3 yuv;
                vec3 rgb;
                yuv.r = texture2D(yTexture, vTexCoord).r;
                yuv.g = texture2D(uTexture, vTexCoord).a - 0.5;
                yuv.b = texture2D(uTexture, vTexCoord).r - 0.5;
                rgb = mat3(1.0, 1.0, 1.0,
                           0.0, -0.39465, 2.03211,
                           1.13983, -0.58060, 0.0) * yuv;
                // output pixel color
                gl_FragColor = vec4(rgb, 1.0);
            }
            else if (u_ImgType == 3) // NV12: chroma bytes are stored as U, V
            {
                vec3 yuv;
                vec3 rgb;
                yuv.r = texture2D(yTexture, vTexCoord).r;
                yuv.g = texture2D(uTexture, vTexCoord).r - 0.5;
                yuv.b = texture2D(uTexture, vTexCoord).a - 0.5;
                rgb = mat3(1.0, 1.0, 1.0,
                           0.0, -0.39465, 2.03211,
                           1.13983, -0.58060, 0.0) * yuv;
                // output pixel color
                gl_FragColor = vec4(rgb, 1.0);
            }
            else if (u_ImgType == 4) // I420: separate U and V textures
            {
                vec3 yuv;
                vec3 rgb;
                yuv.r = texture2D(yTexture, vTexCoord).r;
                yuv.g = texture2D(uTexture, vTexCoord).r - 0.5;
                yuv.b = texture2D(vTexture, vTexCoord).r - 0.5;
                rgb = mat3(1.0, 1.0, 1.0,
                           0.0, -0.39465, 2.03211,
                           1.13983, -0.58060, 0.0) * yuv;
                // output pixel color
                gl_FragColor = vec4(rgb, 1.0);
            }
            else // unknown type: draw white
            {
                gl_FragColor = vec4(1.0);
            }
        }
    );
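When the rendered colors look off, it can help to reproduce the shader's arithmetic on the CPU and compare a few pixels. The sketch below mirrors the same matrix in plain C; `yuv_to_rgb_like_shader` is a hypothetical helper, and the rounding to 8 bits is an assumption about how the result is read back. Note that mat3 is column-major in GLSL, so the three columns of the shader's matrix multiply Y, U and V respectively.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Clamp to [0, 1] and convert to an 8-bit channel value. */
static uint8_t to_u8(float x)
{
    if (x < 0.0f) x = 0.0f;
    if (x > 1.0f) x = 1.0f;
    return (uint8_t)(x * 255.0f + 0.5f);
}

/* Hypothetical CPU reference for the fragment shader's YUV -> RGB step.
 * Inputs are the raw 8-bit samples; texture2D() would return them / 255. */
void yuv_to_rgb_like_shader(uint8_t y8, uint8_t u8, uint8_t v8, uint8_t rgb[3])
{
    assert(rgb != NULL);
    float y = y8 / 255.0f;
    float u = u8 / 255.0f - 0.5f;
    float v = v8 / 255.0f - 0.5f;

    rgb[0] = to_u8(y + 1.13983f * v);                 /* R */
    rgb[1] = to_u8(y - 0.39465f * u - 0.58060f * v);  /* G */
    rgb[2] = to_u8(y + 2.03211f * u);                 /* B */
}
```

Neutral chroma (U = V = 128) should come out as a nearly gray pixel. The constants are the ones from the shader; whether they are the best match for a given stream's color space and range is outside the scope of this sketch.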

Uploading the textures:

    // NOTE: these uploads assume tightly packed rows, i.e. pFrame->linesize[n]
    // equals the number of bytes per row that is uploaded.
    switch (pCodecCtx->pix_fmt) {
        case AV_PIX_FMT_RGBA:
        {
            glActiveTexture(GL_TEXTURE0);
            glBindTexture(GL_TEXTURE_2D, texts[0]);
            glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, width, height, GL_RGBA, GL_UNSIGNED_BYTE, pFrame->data[0]);
        }
            break;
        case AV_PIX_FMT_NV21:
        case AV_PIX_FMT_NV12:
        {
            // Activate texture unit 0 and bind the Y texture
            glActiveTexture(GL_TEXTURE0);
            glBindTexture(GL_TEXTURE_2D, texts[0]);
            // Replace the texture contents with the Y plane
            glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, width, height, GL_LUMINANCE, GL_UNSIGNED_BYTE, pFrame->data[0]);
            // Update the interleaved UV plane: two bytes per texel, half size in both directions
            glActiveTexture(GL_TEXTURE0 + 1);
            glBindTexture(GL_TEXTURE_2D, texts[1]);
            glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, width / 2, height / 2, GL_LUMINANCE_ALPHA, GL_UNSIGNED_BYTE, pFrame->data[1]);
        }
            break;
        case AV_PIX_FMT_YUV420P:
        {
            // Texture unit 0: Y plane
            glActiveTexture(GL_TEXTURE0);
            glBindTexture(GL_TEXTURE_2D, texts[0]);
            glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, width, height, GL_LUMINANCE, GL_UNSIGNED_BYTE, pFrame->data[0]);
            // Texture unit 1: U plane
            glActiveTexture(GL_TEXTURE0 + 1);
            glBindTexture(GL_TEXTURE_2D, texts[1]);
            glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, width / 2, height / 2, GL_LUMINANCE, GL_UNSIGNED_BYTE, pFrame->data[1]);
            // Texture unit 2: V plane
            glActiveTexture(GL_TEXTURE0 + 2);
            glBindTexture(GL_TEXTURE_2D, texts[2]);
            glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, width / 2, height / 2, GL_LUMINANCE, GL_UNSIGNED_BYTE, pFrame->data[2]);
        }
            break;
        default:
            // Other pixel formats are not handled here
            break;
    }
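One pitfall with uploads like these: glTexSubImage2D reads rows back to back (subject to GL_UNPACK_ALIGNMENT), whereas a decoded AVFrame may pad each row, so pFrame->linesize[n] can be larger than the visible row. If the picture appears sheared, copying each plane into a tightly packed buffer first is one possible workaround. The helper below is a hypothetical sketch of that copy, not part of the original player.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical helper: copy `rows` rows of `row_bytes` bytes each from a
 * padded plane (linesize bytes between row starts) into a packed buffer.
 * For the Y plane row_bytes is width; for an NV12/NV21 chroma plane it is
 * 2 * (width / 2); for an I420 chroma plane it is width / 2. */
void pack_plane(uint8_t *dst, const uint8_t *src,
                int linesize, int row_bytes, int rows)
{
    assert(dst != NULL && src != NULL);
    assert(row_bytes > 0 && linesize >= row_bytes);
    for (int r = 0; r < rows; r++) {
        memcpy(dst + (size_t)r * row_bytes,
               src + (size_t)r * linesize,
               (size_t)row_bytes);
    }
}
```

With packed data, calling glPixelStorei(GL_UNPACK_ALIGNMENT, 1) before the upload avoids the default 4-byte row alignment, which matters when the row size is not a multiple of 4.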

4. Writing to MP4

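If the resulting MP4 cannot be played, or plays only in VLC, and phones, PCs or Macs cannot generate a thumbnail for it, the MP4 file header is probably wrong. The key is to set extradata and extradata_size on the output stream's AVStream->codecpar, together with AV_CODEC_FLAG_GLOBAL_HEADER. With software decoding, ffmpeg fills in extradata and extradata_size on the AVCodecContext automatically, so they can be read from there and copied to AVStream->codecpar. With hardware decoding, extract extradata and extradata_size from an AVPacket as described in section 1, then copy them to AVStream->codecpar.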

    AVStream *in_stream = ifmt_ctx_v->streams[i];
    // The codec argument is optional; passing NULL avoids the deprecated in_stream->codec.
    AVStream *out_stream = avformat_new_stream(ofmt_ctx, NULL);
    videoindex_v = i;
    if (!out_stream) {
        LOGD("Failed allocating output stream");
        ret = AVERROR_UNKNOWN;
        goto end;
    }
    videoindex_out = out_stream->index;
    // Copy the codec parameters of the input stream
    ret = avcodec_parameters_copy(out_stream->codecpar, in_stream->codecpar);
    if (ret < 0) {
        LOGD("Failed to copy codec parameters from input to output stream");
        goto end;
    }
    // extradata is needed to write the file header. Release whatever
    // avcodec_parameters_copy() copied and use the decoder's extradata instead.
    av_freep(&out_stream->codecpar->extradata);
    out_stream->codecpar->extradata_size = 0;
    out_stream->codecpar->extradata = (uint8_t *) av_mallocz(pCodecCtx->extradata_size + AV_INPUT_BUFFER_PADDING_SIZE);
    if (!out_stream->codecpar->extradata) {
        ret = AVERROR(ENOMEM);
        goto end;
    }
    memcpy(out_stream->codecpar->extradata, pCodecCtx->extradata, pCodecCtx->extradata_size);
    out_stream->codecpar->extradata_size = pCodecCtx->extradata_size;
    LOGD("got extradata, %d size!\n", out_stream->codecpar->extradata_size);
    out_stream->codecpar->codec_tag = 0;
    if (ofmt_ctx->oformat->flags & AVFMT_GLOBALHEADER) {
        // AVStream.codec is deprecated and was removed in FFmpeg 5.0;
        // this line only compiles against the older API used in this article.
        out_stream->codec->flags |= AV_CODEC_FLAG_GLOBAL_HEADER;
        LOGD("AV_CODEC_FLAG_GLOBAL_HEADER");
    }
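When debugging a file whose header looks wrong, it can be useful to check what kind of extradata is about to be copied. H.264 extradata generally comes in two shapes: AnnexB (it starts with a 00 00 01 or 00 00 00 01 start code) or an avcC record as stored in MP4 (its first byte, configurationVersion, is 1). The sketch below tells them apart; `classify_h264_extradata` and the enum are hypothetical names, and the check is a heuristic rather than a full parser.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

typedef enum {
    EXTRADATA_UNKNOWN,
    EXTRADATA_ANNEXB,  /* start-code prefixed SPS/PPS */
    EXTRADATA_AVCC     /* avcC record as stored in MP4 */
} ExtradataKind;

/* Hypothetical helper: guess the layout of H.264 extradata from its first bytes. */
ExtradataKind classify_h264_extradata(const uint8_t *data, size_t size)
{
    assert(data != NULL || size == 0);
    if (size >= 4 && data[0] == 0 && data[1] == 0 &&
        (data[2] == 1 || (data[2] == 0 && data[3] == 1)))
        return EXTRADATA_ANNEXB;
    /* An avcC record is at least 7 bytes and starts with version 1. */
    if (size >= 7 && data[0] == 1)
        return EXTRADATA_AVCC;
    return EXTRADATA_UNKNOWN;
}
```

Logging the result next to extradata_size before writing the header shows at a glance whether extradata is missing or has an unexpected layout.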

References:

https://blog.csdn.net/yue_huang/article/details/75126155

https://blog.csdn.net/special00/article/details/82533768

https://github.com/bmegli/hardware-video-decoder/issues/5#issuecomment-469857880

https://github.com/bmegli/hardware-video-decoder/blob/2b9bf0f053/hvd.c

https://stackoverflow.com/a/33939577

https://blog.csdn.net/Kennethdroid/article/details/108737936

https://www.jianshu.com/p/65d926ba1f1c/

https://qincji.gitee.io/2021/02/01/afplayer/03_mediacodec/index.html